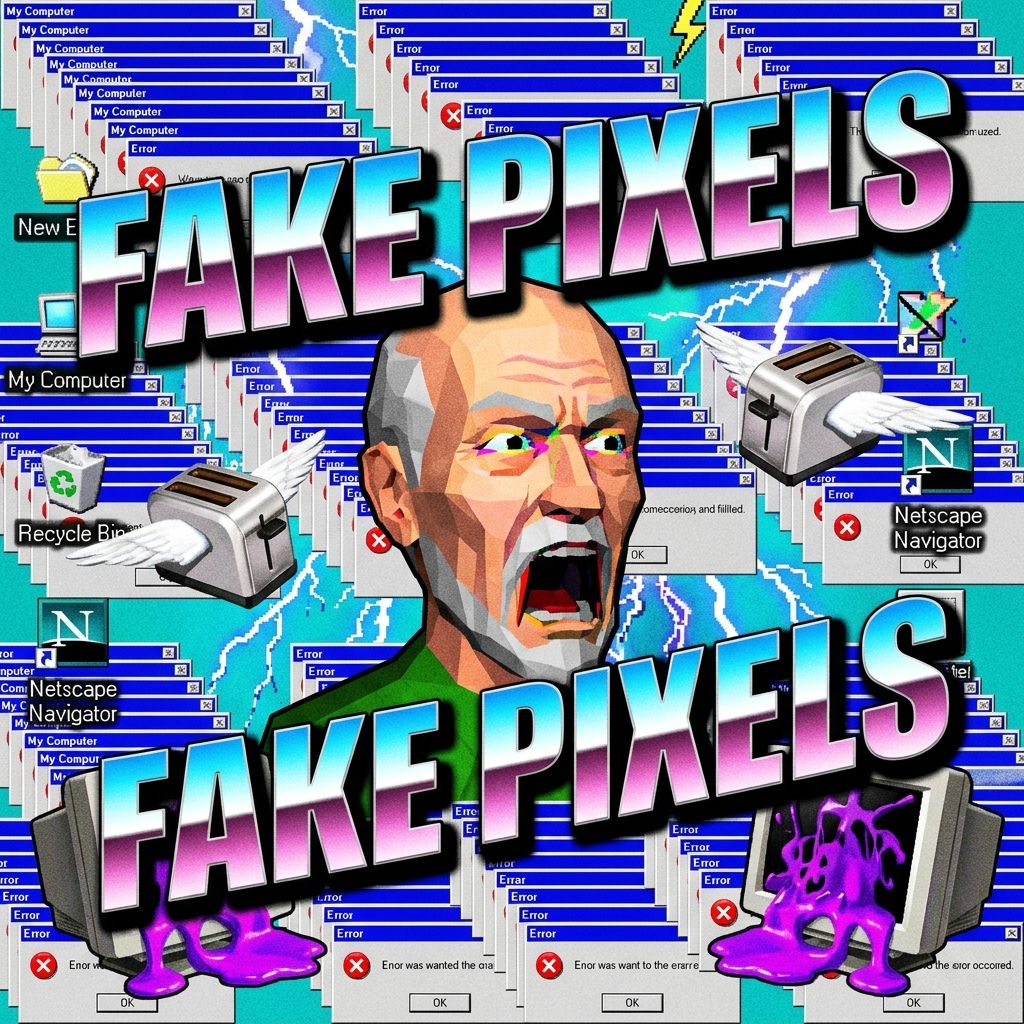

Back When Pixels Were Honest Men

I remember when I bought my first real computer. It was a beige box that weighed as much as a small refrigerator and had less computing power than the digital watch I bought at a gas station last Tuesday. But you know what? Those pixels were honest. If a character in a game was a little brown square, you knew it was a brown square. You didn't need a supercomputer in California to 'generate' a bunch of extra nonsense around it just to make it look like a high-definition photograph. We used our imaginations, for crying out loud! Now, these kids today are up in arms because Nvidia’s new DLSS 5 tech is just making stuff up as it goes along. They call it 'generative AI,' but I call it digital lying. Back in my day, if you lied that much, you were either running for local office or selling used Pintos down on Route 51.

My grandson Tyler tried to explain it to me. He sat me down in front of his glowing neon computer—which looks like a jukebox had a mid-life crisis—and told me that the graphics card is 'hallucinating' extra frames. Hallucinating? In my generation, if you were hallucinating, it meant you’d been spending too much time in a windowless van at a Woodstock tribute concert. Now we’re paying eight hundred dollars for a piece of hardware to do it for us? It’s absolute madness. The gamers are reacting with disgust, and honestly, I haven’t felt this much kinship with the youth since I accidentally liked a post about affordable lawn care on the Facebook.

The Generative Glow-Up Nightmare

The article says this new tech goes way beyond 'upscaling.' It’s doing 'glow-ups.' I thought a glow-up was what my niece did when she finally got her braces off and stopped wearing those baggy flannels, but apparently, it’s when a computer decides that a blade of grass should actually be a detailed 3D model of a fern because the 'neural network' felt like it. It’s like hiring a house painter and coming home to find out he’s decided to paint a mural of a jungle in your hallway because he thought the beige was 'low resolution.' Nobody asked for this! People just want their games to run without the computer catching fire, but Nvidia is too busy playing God with a bunch of silicon chips.

The gamers are saying it looks 'uncanny' and 'disgusting.' I looked at some of the screenshots, and it reminded me of that time I tried to use a panoramic camera at the Grand Canyon and my ex-wife’s arm ended up looking like a six-foot-long sourdough baguette. It’s just not right. There’s a certain soul to a pixelated image that you lose when you let an algorithm smear digital Vaseline all over the screen. It’s the same reason I don't like those newfangled 'smart' refrigerators that tell you when your milk is sour. I have a nose, don't I? I have eyes, don't I? I don't need a robot to tell me what I'm looking at, especially when the robot is clearly on some kind of digital bender.

Conclusion

At the end of the day, I don't need a computer to hallucinate a better world for me. I’ve got my recliner, my evening news, and a pile of old floppy disks that still work if you blow on them hard enough. If Nvidia wants to keep 'generating' reality, they can go right ahead, but they shouldn't be surprised when the rest of us decide we'd rather stick to the pixels we can actually trust. Now, if you'll excuse me, I have to figure out why the microwave is blinking '12:00' and I think the AI might be behind that too.